Introduction

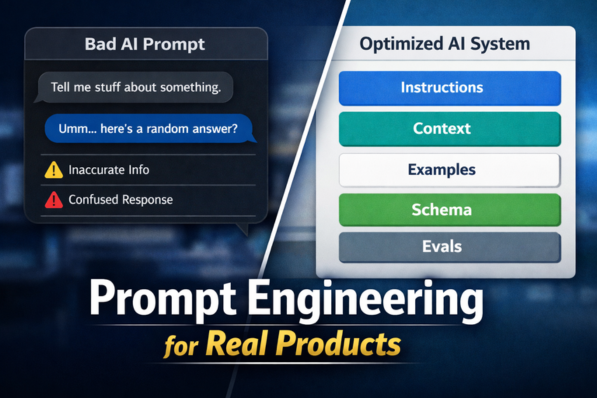

Prompt engineering is often described as a way to “talk to AI better,” but in real products, it is much more than that. It is the discipline of shaping instructions, context, examples, and output rules so a model behaves reliably inside an actual application, not just in a demo.

For teams building AI features into customer support tools, internal copilots, search products, workflow automation, or structured data systems, prompt engineering is one of the main levers for quality, safety, and consistency. It matters because production systems need repeatable outputs, clear boundaries, and measurable performance, not just impressive one-off responses.

What prompt engineering means in a real product

In a product setting, prompt engineering is the process of designing the model’s instructions so it can do a defined job under real constraints. That usually means specifying the task clearly, adding the right context, controlling the response format, telling the model what to do when information is missing, and iterating based on evaluation results. OpenAI, Anthropic, Google, and Microsoft all describe this as a structured practice rather than a one-time writing trick.

A strong production prompt usually includes:

- a clear role or task

- business context

- allowed and disallowed behaviors

- examples of good outputs

- formatting rules

- fallback behavior for uncertainty or missing data

This is why prompt engineering sits close to product design, backend design, and quality assurance. In practice, it is part UX writing, part systems design, and part testing workflow. That is an inference from current vendor guidance, which consistently treats prompts as controllable system behavior rather than casual natural-language input.

Why prompt engineering matters more in products than in experiments

A quick playground result can look good even when the design is weak. Real products expose weaknesses much faster. Users phrase requests unpredictably. Data may be incomplete. Edge cases appear daily. Compliance requirements may limit what the model should say. Outputs may need to fit JSON schemas, UI fields, or downstream automation.

That changes the goal. The question is not “Can the model answer this?” The better question is “Can the model answer this correctly, safely, consistently, and in a format my system can trust?” Structured outputs and evaluation workflows exist precisely because product teams need reliability, not just fluency.

Real product use case 1: Customer support assistants

One of the most practical uses of prompt engineering is AI-assisted customer support. Here, the model may need to answer questions from a knowledge base, summarize tickets, draft replies, classify intent, or decide when to escalate to a human agent.

A weak prompt might simply say, “Answer the customer.” A production-ready prompt is more precise:

- answer only from approved support content

- do not invent policies

- cite or reference source material if available

- ask a clarifying question when the request is incomplete

- escalate billing, legal, refund, or safety-sensitive issues

- keep tone polite and concise

This kind of design reduces hallucinations and helps support workflows stay aligned with company policy. The improvement does not come from “fancier words.” It comes from narrowing the task, defining fallback behavior, and giving the model better context.

Real product use case 2: Data extraction from documents and forms

Another strong product use case is extracting structured information from unstructured text such as invoices, contracts, support emails, resumes, or onboarding documents. In these flows, prompt engineering is tightly linked to output reliability.

For example, a business may want the model to return:

- customer name

- invoice number

- due date

- total amount

- confidence or missing fields

If the output is free-form text, downstream systems become fragile. That is why schema-based outputs are now important in production AI stacks. OpenAI’s Structured Outputs guidance explicitly focuses on making model responses conform to a supplied JSON schema, which helps reduce formatting failures in application pipelines.

In product terms, prompt engineering here means telling the model:

- which fields to extract

- what to do if a field is absent

- how to handle ambiguity

- what exact schema to return

- what not to infer without evidence

Real product use case 3: Internal knowledge copilots

Many companies build internal assistants for policy lookup, technical documentation, HR questions, or workflow support. These are useful, but they can become risky if the model sounds confident when the source material is thin or outdated.

In this setting, prompt engineering should define clear behavior such as:

- use only the provided retrieval context

- say when the answer is not supported by available documents

- separate confirmed facts from suggestions

- summarize in simple language

- return links, citations, or document references when possible

This is especially important in enterprise environments, because a helpful-sounding answer is not enough. The answer must be grounded in available company knowledge and must fail safely when evidence is missing.

Real product use case 4: Workflow automation and agents

Prompt engineering becomes even more important when the model is not only writing text but also calling tools, making decisions, or driving a multi-step workflow. Anthropic’s guidance and OpenAI’s current docs both frame prompting as part of a broader system that can include tools, structured steps, and evaluation loops.

Examples include:

- triaging incoming leads

- routing support tickets

- generating SQL or search queries

- preparing CRM updates

- drafting meeting summaries with action items

- deciding when to call an API or ask for more information

In these systems, prompt design needs explicit control points:

- when to use a tool

- when not to use a tool

- what data the model can trust

- how to report uncertainty

- what counts as task completion

Without those controls, the system may appear smart but behave inconsistently under pressure.

What good prompt engineering usually looks like

Across the major platform guides, several practices appear again and again.

1. Be clear and specific

The model performs better when the task is sharply defined. Vague prompts create vague behavior. This is one of the most consistent recommendations across OpenAI, Anthropic, Google, and Microsoft documentation.

2. Provide structure

Using sections, tags, templates, or system instructions helps the model separate instructions from context and examples. Anthropic specifically recommends XML tags for complex prompts, while Google documents system instructions and structured prompting strategies for production use.

3. Use examples when the task is nuanced

Few-shot prompting helps regulate tone, output shape, and edge-case handling. This is especially useful for classification, transformation, extraction, and style-sensitive tasks.

4. Define what happens when the model is unsure

Microsoft’s guidance explicitly recommends adding a “when unsure” policy. This is one of the most practical habits for real products, because ambiguity is normal in production traffic.

5. Test prompts with evals, not intuition

Prompt quality should be measured against real examples. OpenAI’s Evals guidance frames this as a repeatable cycle: define the task, run evals, inspect failures, and iterate.

Common mistakes teams make

A frequent mistake is treating prompt engineering as copywriting. In reality, strong prompts are closer to executable specifications. Teams also fail when they overload prompts with too many goals at once, skip edge cases, or rely on manual spot checks instead of evaluations. These are practical inferences from vendor documentation that emphasizes clarity, separation of concerns, and iterative testing.

Other common mistakes include:

- asking for structured data without enforcing a schema

- not defining refusal or fallback behavior

- mixing instructions and user data in a messy way

- changing models without re-testing prompts

- assuming one good demo means the system is production-ready

That last point matters because model availability and recommended APIs can change over time. For example, OpenAI’s deprecations and migration guidance show that production systems need maintenance, not just initial setup.

A practical framework for product teams

A simple way to think about prompt engineering for real use cases is this:

Define the job

Be precise about what the feature is supposed to do and what “good” looks like.

Constrain the behavior

Tell the model what sources to use, what not to do, and how to behave when evidence is missing.

Control the output

Use templates, tags, or JSON schemas when the response feeds a UI or backend workflow.

Evaluate with real data

Use realistic samples, adversarial cases, and failure examples, then iterate.

Monitor and update

As products evolve, prompts, models, tools, and guardrails need re-testing.

Risks and limits

Prompt engineering is important, but it is not a magic fix for every AI quality problem. Anthropic’s overview explicitly notes that not every failure is best solved through prompt engineering alone; sometimes model choice, cost, latency, retrieval quality, or tool design matters more.

That is an important reality for teams. If the underlying context is wrong, the retrieval is weak, or the workflow is poorly designed, better wording alone will not rescue the system. In mature products, prompt engineering works best alongside good data pipelines, retrieval design, guardrails, schema control, and evaluation systems.

Conclusion

Prompt engineering for real product use cases is not about writing clever prompts. It is about building dependable AI behavior inside an application that real people will use every day. The best prompts are clear, constrained, testable, and tied to the product’s actual workflow.

For product teams, the most useful mindset is simple: treat prompts like part of your system design. Write them carefully, measure them honestly, and improve them with real user cases rather than assumptions.

Key Takeaways

- Prompt engineering matters most when AI is part of a real workflow, not just a demo.

- Good product prompts define task, context, boundaries, uncertainty handling, and output format.

- Common use cases include support assistants, data extraction, knowledge copilots, and workflow automation.

- Structured outputs and evals make AI features more reliable in production.

- Prompt engineering helps a lot, but it works best with strong retrieval, tooling, and testing.

Verification Note

This blog is based on publicly available and verifiable information from official documentation and reputable platform guides. Unsupported claims, invented statistics, and unverified quotes have been avoided. Some examples in the article are generalized product patterns rather than references to one specific company case.

References

- OpenAI Help Center, Best practices for prompt engineering with the OpenAI API.

- Anthropic Docs, Prompting best practices.

- Google Cloud Vertex AI Docs, Introduction to prompting.

- Microsoft Learn, Prompt engineering techniques.

- OpenAI API Docs, Working with evals.

- OpenAI API Docs, Structured model outputs.

- Google Cloud Vertex AI Docs, Overview of prompting strategies.

- Google Cloud Vertex AI Docs, System instructions.